How AI Can Read Your Thoughts: The Revolutionary Science of Neural Decoding and Mind-Reading Technology

The Age of AI-Powered Mind Reading

For centuries, the contents of the human mind have remained the ultimate private frontier. Our thoughts, memories, desires, and inner dialogues have been shielded from the outside world by the impenetrable vault of our skulls. No matter how advanced surveillance technology became, what happened inside your brain stayed inside your brain.

That era may be coming to an end.

Thanks to rapid breakthroughs in artificial intelligence and neuroscience, researchers around the world are developing systems that can effectively read your thoughts. These AI-powered tools analyze patterns of brain activity and translate them into coherent language, vivid images, and even reconstructed video sequences. What once belonged to the realm of science fiction is now emerging as scientific fact — and it's advancing faster than almost anyone predicted.

From helping paralyzed patients communicate to raising profound questions about mental privacy, the convergence of AI and brain science represents one of the most transformative — and potentially disruptive — technological developments of our time.

What Is Neural Decoding? Understanding the Science Behind AI Mind Reading

The Basics of Neural Decoding

Neural decoding is the process of interpreting brain activity patterns to determine what a person is thinking, seeing, hearing, or imagining. Every thought you have, every image you visualize, and every word you silently speak generates unique patterns of electrical and metabolic activity across billions of neurons in your brain.

Scientists have long known that these patterns exist. The challenge has always been reading them accurately and translating them into something meaningful. This is where artificial intelligence enters the picture.

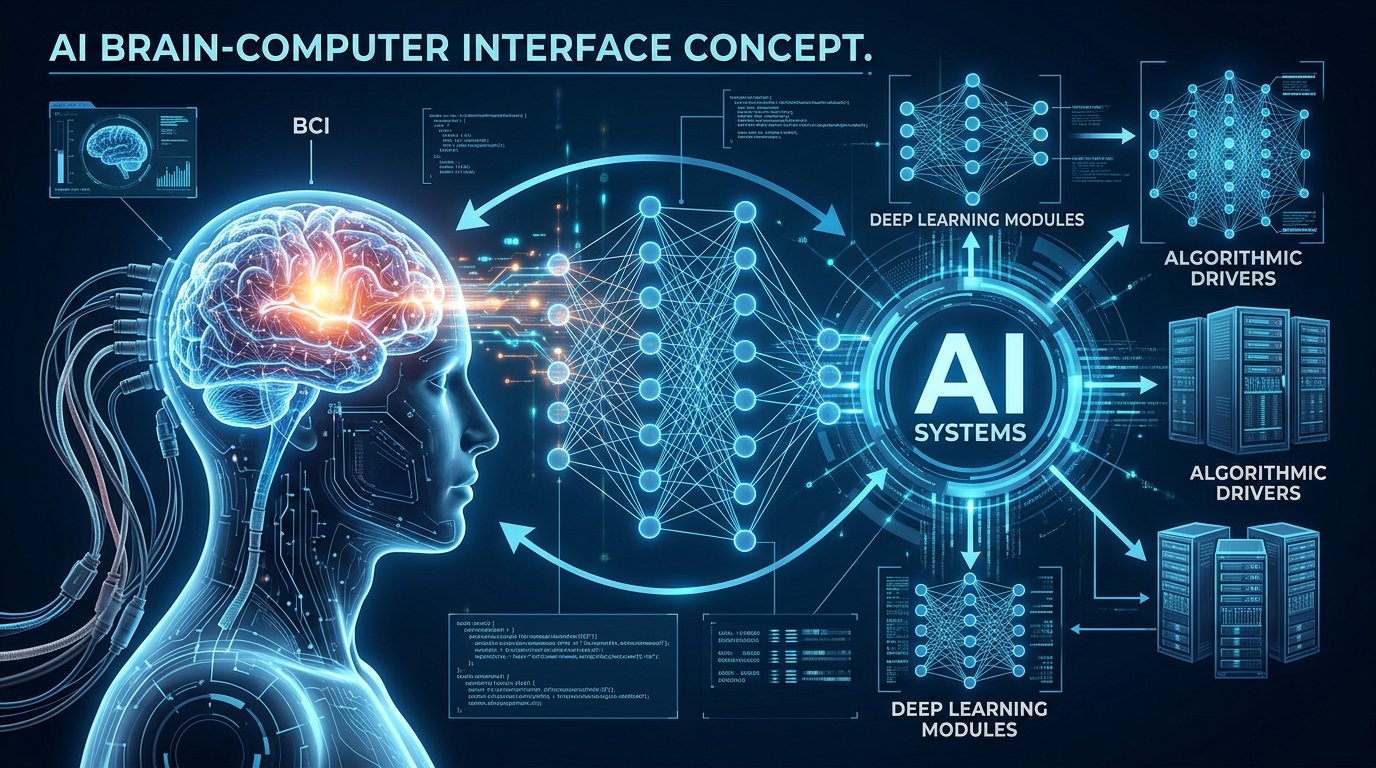

Modern AI systems — particularly deep learning models and large language models (LLMs) — excel at identifying complex patterns in enormous datasets. When trained on brain imaging data, these algorithms can learn to associate specific neural activity patterns with specific thoughts, words, or visual experiences.

How Brain Imaging Technologies Capture Thought

Several brain imaging technologies serve as the foundation for AI-powered thought decoding:

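- Functional Magnetic Resonance Imaging (fMRI): Measures changes in blood oxygenation levels across different brain regions. When a brain area becomes more active, blood flow to it increases, changing the local oxygenation level and creating a detectable signal. fMRI provides excellent spatial resolution, showing where in the brain activity occurs, but the blood-flow response lags neural activity by several seconds, so its temporal resolution is poor.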

- Electroencephalography (EEG): Records electrical signals from the scalp using external electrodes. EEG offers excellent temporal resolution, capturing the timing of brain activity with millisecond precision, though its spatial resolution is limited.

- Electrocorticography (ECoG): Involves placing electrode arrays directly on the brain's surface during surgery. ECoG provides both good spatial and temporal resolution but requires invasive procedures.

- Implanted Microelectrode Arrays: Tiny electrodes inserted directly into brain tissue to record from individual neurons. These offer the highest resolution but are the most invasive.

Each technology offers different trade-offs between resolution, invasiveness, and practicality — and AI researchers are developing decoding systems for all of them.

Groundbreaking Research: How Scientists Are Teaching AI to Read Minds

Decoding Language from Brain Activity

One of the most remarkable achievements in neural decoding came from researchers at the University of Texas at Austin. In a landmark 2023 study published in Nature Neuroscience, a team led by Alexander Huth and Jerry Tang developed an AI system called a "semantic decoder" that could translate brain activity recorded via fMRI into continuous streams of natural language.

Participants lay in an fMRI scanner and listened to hours of podcast recordings while the system tracked their brain activity. The AI — built on a foundation similar to the GPT family of large language models — learned to map specific brain activity patterns to the meaning of the words being heard.

The results were striking. When participants later listened to new stories or even silently imagined telling a story, the decoder could generate text that captured the gist and, occasionally, the exact words and phrases of what they were thinking. The output wasn't a word-for-word transcription, and errors were common, but it often captured the semantic content (the meaning) of the participant's thoughts.
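
One way to picture a decoder like this is as a ranking loop: candidate phrases are proposed, a model predicts the brain response each phrase would evoke, and the candidate whose prediction best matches the recording wins. The sketch below is a toy version of that idea, not the study's implementation. The three-number "meaning" vectors, the weights, and the candidate phrases are all invented for illustration.

```python
import math

# Hypothetical 3-dimensional "meaning" vectors for a few phrases (invented values).
EMBEDDINGS = {
    "she drove home": [0.9, 0.1, 0.0],
    "he ate dinner": [0.1, 0.8, 0.2],
    "they went swimming": [0.0, 0.2, 0.9],
}

# Hypothetical weights: each row maps a meaning vector to one voxel's response.
WEIGHTS = [
    [1.0, 0.0, 0.5],
    [0.0, 1.0, 0.0],
    [0.5, 0.0, 1.0],
    [0.2, 0.7, 0.1],
]

def predict_response(embedding):
    """Predict one value per voxel from a meaning vector."""
    return [sum(w * x for w, x in zip(row, embedding)) for row in WEIGHTS]

def correlation(a, b):
    """Pearson correlation between two equal-length lists."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    norm = math.sqrt(
        sum((x - mean_a) ** 2 for x in a) * sum((y - mean_b) ** 2 for y in b)
    )
    return cov / norm if norm else 0.0

def rank_candidates(observed, candidates):
    """Sort candidates by how well their predicted response matches the recording."""
    scored = [
        (correlation(predict_response(EMBEDDINGS[c]), observed), c)
        for c in candidates
    ]
    return sorted(scored, reverse=True)

# Simulated recording: the response to "he ate dinner" plus a small fixed disturbance.
clean = predict_response(EMBEDDINGS["he ate dinner"])
observed = [v + n for v, n in zip(clean, [0.05, -0.03, 0.02, 0.01])]

best_score, best_phrase = rank_candidates(observed, list(EMBEDDINGS))[0]
print(best_phrase)
```

In this toy setup the decoder never reads words directly from the signal. It only asks which candidate explains the recording best, which is why the output tends to be a paraphrase rather than a transcript.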

This was groundbreaking for a crucial reason: it was non-invasive. No surgery was required. The participants simply lay in a scanner, and the AI did the rest.

Translating Thoughts into Speech

At the University of California, San Francisco (UCSF), neuroscientist Edward Chang and his team have made extraordinary strides with implanted brain-computer interfaces. Working with patients who had lost the ability to speak after severe paralysis, such as that caused by a brainstem stroke, the researchers placed electrode arrays on the brain's speech motor cortex.

Their AI system learned to decode the neural signals associated with attempted speech — the brain activity that occurs when a patient tries to talk but physically cannot. The decoder then translated these signals into synthesized speech or text on a screen in real time.

In one remarkable case, a patient who hadn't spoken in over 15 years was able to communicate full sentences through the brain-computer interface, with the decoder accurate enough to support simple conversation.

Reconstructing Visual Experiences

The ability to decode visual experiences from brain activity represents another frontier. Researchers in Japan at Osaka University and teams in the United States and Europe have developed AI systems that can reconstruct images a person is viewing — or even imagining — based solely on brain activity.

Using fMRI data combined with generative AI models (similar to those behind tools like Stable Diffusion and DALL-E), these systems analyze activity in the brain's visual cortex and produce images that resemble what the participant was seeing or visualizing. Early results produced blurry approximations, but recent reconstructions capture far more of the colors, shapes, and spatial layout of the original image, even though fine details are often wrong.

Some researchers have extended this work to video reconstruction, generating rough but recognizable sequences of moving images decoded from brain activity.

Brain-Computer Interfaces: Giving a Voice to the Voiceless

Medical Applications That Are Changing Lives

The most immediate and compelling application of AI mind-reading technology lies in medicine. For millions of people living with conditions that rob them of the ability to communicate — including ALS, locked-in syndrome, severe spinal cord injuries, and advanced stroke — neural decoding represents nothing short of liberation.

Brain-computer interfaces (BCIs) powered by AI are already being tested in clinical trials to help patients:

- Type messages using only their thoughts

- Control robotic limbs and prosthetic devices

- Navigate computer interfaces and access the internet

- Communicate with family members and caregivers in real time

- Operate wheelchairs and other assistive devices through neural commands

Companies like Neuralink (founded by Elon Musk), Synchron, Blackrock Neurotech, and Paradromics are racing to develop commercial BCI systems. Synchron's Stentrode device, which is inserted through a blood vessel rather than requiring open brain surgery, has already been implanted in human patients and allows them to control computers through thought alone.

Neuralink's first human patient, implanted in early 2024, demonstrated the ability to control a computer cursor, play video games, and browse the internet using only neural signals — marking a significant milestone in the field.

The Evolution of Assistive Communication Technology

The progression of assistive communication technology tells a powerful story of innovation:

- 1990s–2000s: Eye-tracking systems allowed paralyzed patients to select letters on a screen by looking at them — slow but revolutionary.

- 2010s: Simple BCI systems decoded basic neural commands (like "move cursor up") with limited speed and accuracy.

- 2020s: AI-enhanced BCIs began decoding attempted speech, imagined handwriting, and complex cognitive intentions at conversational speeds.

- Future: Researchers aim for fully naturalistic, real-time thought-to-speech systems that operate as seamlessly as natural conversation.

The speed of improvement is staggering. Early BCIs allowed communication at perhaps 5-10 words per minute. The latest systems, enhanced by sophisticated AI decoding, have reached roughly 60-80 words per minute. That is still only about half the pace of natural conversation (around 150 words per minute), but the gap is closing quickly.

How Does AI Actually Decode Brain Signals?

The Role of Machine Learning and Deep Neural Networks

At the core of AI mind-reading technology are machine learning algorithms, particularly deep neural networks. Here's a simplified explanation of how the process works:

- Data Collection: A participant undergoes brain scanning (fMRI, EEG, or implanted electrodes) while performing specific tasks — listening to stories, viewing images, thinking of words, or attempting to speak.

- Training Phase: The AI model is fed thousands of examples linking specific brain activity patterns to known stimuli or actions. For instance, the system learns that a particular pattern of activity in the auditory cortex corresponds to hearing the word "dog."

- Pattern Recognition: The deep neural network identifies statistical relationships between brain activity patterns and their corresponding meanings. These relationships are often incredibly complex and subtle — far beyond what human researchers could detect manually.

- Decoding/Prediction: When presented with new, unseen brain activity, the trained model predicts what the person is thinking, hearing, seeing, or intending based on the patterns it learned during training.

- Output Generation: The decoded information is translated into text, synthesized speech, or reconstructed images using language models or generative AI systems.
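
The steps above can be sketched in a few lines of code. This is a minimal simulation under loud assumptions: the "brain activity" is made-up numbers, and a simple nearest-centroid classifier stands in for the deep neural network, so it shows the shape of the pipeline rather than how any real decoder is built.

```python
import random

random.seed(0)

# Hypothetical activity patterns for three stimuli (invented for this simulation).
PATTERNS = {
    "dog": [1.0, 0.0, 0.0, 1.0, 0.5, 0.0],
    "house": [0.0, 1.0, 0.0, 0.5, 0.0, 1.0],
    "music": [0.0, 0.0, 1.0, 0.0, 1.0, 0.5],
}

def record_trial(label, noise=0.3):
    """Data collection: simulate one noisy recording of a known stimulus."""
    return [v + random.gauss(0, noise) for v in PATTERNS[label]]

def train(trials):
    """Training and pattern recognition: average the recordings for each label."""
    grouped = {}
    for label, signal in trials:
        grouped.setdefault(label, []).append(signal)
    return {
        label: [sum(col) / len(col) for col in zip(*signals)]
        for label, signals in grouped.items()
    }

def decode(model, signal):
    """Decoding: pick the label whose learned pattern is closest to the new signal."""
    def distance(label):
        return sum((a - b) ** 2 for a, b in zip(model[label], signal))
    return min(model, key=distance)

training_set = [(label, record_trial(label)) for label in PATTERNS for _ in range(30)]
model = train(training_set)

# Output generation: here the "output" is just the decoded label for unseen trials.
test_set = [(label, record_trial(label)) for label in PATTERNS for _ in range(20)]
accuracy = sum(decode(model, s) == label for label, s in test_set) / len(test_set)
print(f"Decoded {accuracy:.0%} of held-out trials correctly")
```

Real systems differ mainly in scale: many more channels, far noisier signals, and an open-ended set of possible outputs instead of three labels.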

The Importance of Individual Calibration

A critical aspect of current neural decoding technology is that most systems must be individually calibrated. Brain activity patterns vary significantly from person to person — your neural signature for thinking about "a dog running in a park" is different from mine. Therefore, AI decoders typically need hours of training data from each specific individual before they can accurately decode that person's thoughts.

However, researchers are making progress toward cross-subject decoding, where models trained on data from multiple individuals can generalize to new people with minimal calibration. This would dramatically expand the practical applications of the technology.
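
A small simulation can show why calibration matters. The sketch below assumes, purely for illustration, that two people share the same underlying patterns but express them on differently ordered channels. The subject names, channel orderings, and noise level are all hypothetical.

```python
import random

random.seed(1)

# Hypothetical shared "concept" patterns (invented values).
CONCEPTS = {
    "dog": [1.0, 0.0, 0.0, 1.0, 0.5, 0.0],
    "house": [0.0, 1.0, 0.0, 0.5, 0.0, 1.0],
    "music": [0.0, 0.0, 1.0, 0.0, 1.0, 0.5],
}
# Invented channel orderings: subject B expresses the same patterns on shuffled channels.
SUBJECT_LAYOUT = {"A": [0, 1, 2, 3, 4, 5], "B": [2, 0, 1, 5, 3, 4]}

def record(subject, label, noise=0.3):
    """Simulate one noisy trial as seen through a subject's own channel layout."""
    pattern = CONCEPTS[label]
    return [pattern[i] + random.gauss(0, noise) for i in SUBJECT_LAYOUT[subject]]

def fit(subject, trials_per_label):
    """Calibrate a nearest-centroid decoder on one subject's data."""
    model = {}
    for label in CONCEPTS:
        trials = [record(subject, label) for _ in range(trials_per_label)]
        model[label] = [sum(col) / len(col) for col in zip(*trials)]
    return model

def accuracy(model, subject, trials_per_label=50):
    """Fraction of fresh trials from `subject` that the decoder labels correctly."""
    hits = total = 0
    for label in CONCEPTS:
        for _ in range(trials_per_label):
            signal = record(subject, label)
            guess = min(
                model,
                key=lambda k: sum((a - b) ** 2 for a, b in zip(model[k], signal)),
            )
            hits += guess == label
            total += 1
    return hits / total

model_a = fit("A", trials_per_label=30)
same_subject = accuracy(model_a, "A")    # decoder used on the person it was trained on
cross_subject = accuracy(model_a, "B")   # same decoder applied to a different person
recalibrated = accuracy(fit("B", trials_per_label=30), "B")  # after calibrating on B
print(same_subject, cross_subject, recalibrated)
```

In this toy world the decoder works well on the person it was trained on, fails on the second person, and recovers once it is recalibrated. Cross-subject methods aim to learn the mapping between people so that the recalibration step needs far less data.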

Ethical Implications: Privacy, Consent, and the Future of Mental Freedom

The Threat to Mental Privacy

As AI mind-reading technology advances, it raises what many ethicists consider the most profound privacy question in human history: Do we have a right to mental privacy?

Throughout all of human civilization, our thoughts have been inherently private. No government, no corporation, no other person could access the contents of our minds without our voluntary disclosure. AI neural decoding technology threatens to change this fundamental aspect of the human condition.

Key concerns include:

- Involuntary thought surveillance: Could governments or employers eventually use neural decoding to monitor people's thoughts without their knowledge or consent?

- Coerced brain scanning: Could authorities compel individuals to undergo brain scans during criminal investigations, effectively creating a "mind search"?

- Commercial exploitation: Could companies use simplified versions of thought-decoding technology to gauge consumers' true reactions to products, advertisements, or political messaging?

- Data security: If brain data is collected and stored, how would it be protected from hackers, breaches, or misuse?

The Call for "Neurorights"

In response to these concerns, a growing movement of scientists, ethicists, and legal scholars is calling for the establishment of "neurorights" — fundamental human rights specifically designed to protect mental privacy and cognitive liberty in the age of neurotechnology.

The NeuroRights Foundation, led by Columbia University neuroscientist Rafael Yuste, has proposed five core neurorights:

- The right to mental privacy — Protection from unauthorized access to neural data

- The right to personal identity — Safeguards against technology that could alter one's sense of self

- The right to free will — Protection from external manipulation of decision-making through neurotechnology

- The right to fair access to mental augmentation — Ensuring neurotechnology benefits are equitably distributed

- The right to protection from algorithmic bias — Preventing discriminatory outcomes from neural data analysis

In 2021, Chile became the first country in the world to enshrine neurorights in its constitution, and other nations are considering similar legislation. The European Union has also begun exploring regulatory frameworks for neurotechnology under its broader AI governance initiatives.

Current Safeguards and Limitations

It's important to note that current AI mind-reading technology has significant limitations that provide some natural safeguards:

- Cooperation is required: The most advanced decoders, particularly fMRI-based systems, require the active cooperation of the subject. Studies have shown that participants can effectively "block" the decoder by performing a competing mental task, such as naming animals or silently telling a different story.

- Expensive, bulky equipment: fMRI machines cost millions of dollars, fill entire rooms, and require participants to lie completely still. This is not a technology that can be covertly deployed.

- Individual training needed: As noted above, most systems require extensive calibration for each person, making mass surveillance impractical with current technology.

- Imperfect accuracy: While remarkable, current decoders produce approximations of thoughts, not precise transcriptions. Errors and misinterpretations remain common.

However, technology evolves rapidly. The portable, affordable, and accurate mind-reading devices that seem implausible today could become reality within a decade or two — making proactive ethical and legal frameworks essential.

The Role of Major Tech Companies and Startups

The Corporate Race for Brain-Computer Interfaces

The potential of AI-powered neural decoding has attracted enormous investment from both established tech giants and ambitious startups:

- Neuralink: Elon Musk's company is developing high-bandwidth implanted BCIs with thousands of electrodes. Their first human trials have demonstrated impressive capabilities for cursor control and digital interaction.

- Meta (formerly Facebook): Meta's research labs have invested heavily in non-invasive neural interface technology, aiming to develop wearable devices that could allow users to type or interact with augmented reality using thought alone.

- Synchron: This Australian-American company has developed a less invasive BCI that is inserted through blood vessels, avoiding open brain surgery. It has received an investigational device exemption (IDE) from the FDA, which permits human trials in the United States.

- Kernel: Founded by entrepreneur Bryan Johnson, Kernel is developing helmet-like devices that use advanced neuroimaging to measure and decode brain activity non-invasively.

- Apple, Google, and Microsoft: While less publicly visible in BCI research, all three tech giants hold patents and conduct research related to neural interfaces, brain-signal processing, and AI-powered cognition tools.

The competitive landscape suggests that commercial neural decoding products could reach consumers far sooner than many experts anticipated, making regulatory preparedness all the more urgent.

Investment and Market Growth

The global brain-computer interface market is projected to grow from approximately $2 billion in 2024 to between $8 billion and $10 billion by 2030, according to multiple market research firms. Investment in neurotechnology startups has surged, with venture capital funding in the sector increasing by more than 300% over the past five years.
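
To put that projection in perspective, it helps to convert it into a yearly growth rate. The short calculation below uses only the figures quoted above and the standard compound annual growth rate formula. The function name is just an illustrative choice.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by growing from start_value to end_value."""
    return (end_value / start_value) ** (1 / years) - 1

# Figures quoted above: about $2 billion in 2024, $8-10 billion by 2030 (six years).
years = 2030 - 2024
low = cagr(2.0, 8.0, years)
high = cagr(2.0, 10.0, years)
print(f"Implied annual growth: {low:.1%} to {high:.1%}")
```

The projection works out to roughly 26% to 31% growth per year, sustained for six years.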

This rapid commercialization raises questions about whether safety, ethics, and privacy protections can keep pace with technological deployment.

Future Possibilities: Where AI Mind Reading Is Headed

Near-Term Developments (2025-2030)

In the coming years, experts anticipate several significant milestones:

- Faster, more accurate speech BCIs that allow paralyzed patients to communicate at near-normal conversational speeds

- Improved non-invasive decoding through advances in portable fMRI alternatives, high-density EEG, and functional near-infrared spectroscopy (fNIRS)

- Better visual reconstruction systems capable of producing high-resolution images and video from brain activity

- Emotion and mood decoding for mental health applications, potentially enabling objective diagnosis and monitoring of depression, anxiety, and PTSD

- Wider clinical deployment of FDA-approved BCI devices in hospitals and rehabilitation centers

Long-Term Possibilities (2030 and Beyond)

Looking further ahead, the implications become even more profound:

- Brain-to-brain communication: Direct neural links between individuals, enabling a form of technologically mediated telepathy

- Memory recording and playback: The ability to capture, store, and replay mental experiences

- Enhanced learning: AI systems that can directly interface with the brain to accelerate knowledge acquisition

- Dream decoding: Recording and interpreting the content of dreams

- Cognitive augmentation: Merging AI capabilities with human cognition to enhance memory, processing speed, and creative thinking

While these possibilities remain speculative, the pace of progress suggests that at least some of them may become reality within our lifetimes.

Challenges and Limitations of Current Technology

Technical Hurdles

Despite the extraordinary progress, significant technical challenges remain:

- Signal noise: Brain signals are incredibly noisy, and extracting meaningful information requires sophisticated filtering and processing

- Limited vocabulary: Most speech decoders work with restricted vocabularies or produce approximations rather than exact words

- Physical constraints: fMRI requires absolute stillness, limiting practical applications; EEG provides lower resolution

- Long-term stability: Implanted electrodes can degrade over time as the brain's immune response creates scar tissue around them

- Computational demands: Real-time neural decoding requires enormous computational power, particularly when using deep learning models
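
On the signal-noise point, one standard remedy is to repeat a measurement many times and average the results, since random noise shrinks roughly with the square root of the number of trials. The sketch below simulates that effect. The signal amplitude and noise level are hypothetical values chosen so that the noise swamps the signal in any single trial.

```python
import random
import statistics

random.seed(42)

TRUE_SIGNAL = 1.0   # hypothetical response amplitude (arbitrary units)
NOISE_STD = 5.0     # hypothetical background noise, much larger than the signal

def averaged_measurement(n_trials):
    """Average n_trials noisy recordings of the same underlying response."""
    trials = [TRUE_SIGNAL + random.gauss(0, NOISE_STD) for _ in range(n_trials)]
    return statistics.fmean(trials)

def residual_noise(n_trials, repeats=2000):
    """Estimate the spread left in the average after combining n_trials recordings."""
    averages = [averaged_measurement(n_trials) for _ in range(repeats)]
    return statistics.pstdev(averages)

noise_1 = residual_noise(1)
noise_100 = residual_noise(100)
print(f"noise after 1 trial: {noise_1:.2f}, after 100 trials: {noise_100:.2f}")
```

Averaging 100 trials cuts the noise by about a factor of ten, which is one reason decoders need so much data per person, and why decoding a single fleeting thought is much harder than decoding a repeated one.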

Biological Complexity

The human brain contains approximately 86 billion neurons, each forming thousands of connections with other neurons. The resulting network contains hundreds of trillions of synaptic connections — a level of complexity that dwarfs even the most powerful AI systems.

Current decoding technology captures only a tiny fraction of this activity. As our tools for measuring brain activity improve and our AI models become more sophisticated, decoding accuracy will continue to advance — but fully "reading" the brain in all its complexity remains a distant goal.

How to Prepare: What Society Should Do Now

Policy Recommendations

Experts in neurotechnology ethics recommend several proactive measures:

- Establish clear legal frameworks for neural data privacy before the technology becomes widespread

- Classify brain data as sensitive personal information under existing data protection regulations like GDPR

- Require informed consent for any collection or use of neural data

- Prohibit coerced brain scanning in law enforcement, employment, and insurance contexts

- Fund independent research into the societal implications of neurotechnology

- Create international standards and treaties for the ethical development and deployment of mind-reading AI

- Educate the public about both the benefits and risks of neural decoding technology

Individual Awareness

For individuals, staying informed about these developments is crucial. Understanding what the technology can and cannot do helps prevent both unwarranted fear and dangerous complacency. As neural decoding technology moves from research laboratories to commercial products, informed citizens will be better positioned to advocate for appropriate safeguards.

Conclusion: Navigating the Promise and Peril of AI Mind Reading

AI-powered mind-reading technology stands at a remarkable inflection point. On one hand, it offers life-changing benefits for millions of people with communication disabilities, new tools for understanding and treating mental illness, and unprecedented insights into the nature of human consciousness itself. On the other hand, it poses existential questions about privacy, identity, and freedom of thought that humanity has never before had to confront.

The key to navigating this transformative technology lies in balance — embracing the genuine medical and scientific benefits while establishing robust ethical guardrails before the technology outpaces our ability to control it. The thoughts inside our heads may soon be readable by machines. The question is not whether this will happen, but whether we will be ready when it does.

The conversation about AI and mental privacy is no longer theoretical. It is urgent, it is real, and it demands the attention of policymakers, technologists, ethicists, and every individual who values the sanctity of their own mind.

Frequently Asked Questions (FAQ)

Can AI really read your thoughts right now?

AI can decode certain types of brain activity — such as attempted speech, viewed images, and the gist of heard or imagined stories — with impressive accuracy. However, it cannot yet perform precise, word-for-word mind reading in real-world settings.

Is AI mind reading invasive?

It depends on the technology used. Some systems require surgical implants (like Neuralink's device), while others use non-invasive methods like fMRI or EEG. Non-invasive methods are less precise but don't require surgery.

Can someone read my thoughts without my knowledge?

With current technology, no. The most accurate systems require either surgical implants or the participant's active cooperation in a scanner. However, future advances could potentially change this.

What are neurorights?

Neurorights are proposed fundamental human rights designed to protect mental privacy, cognitive liberty, and personal identity in the age of neurotechnology. Chile is the first country to constitutionally enshrine such rights.

Who benefits most from this technology?

Currently, the greatest beneficiaries are patients with severe communication disabilities, such as those with ALS, locked-in syndrome, or paralysis from spinal cord injuries.
